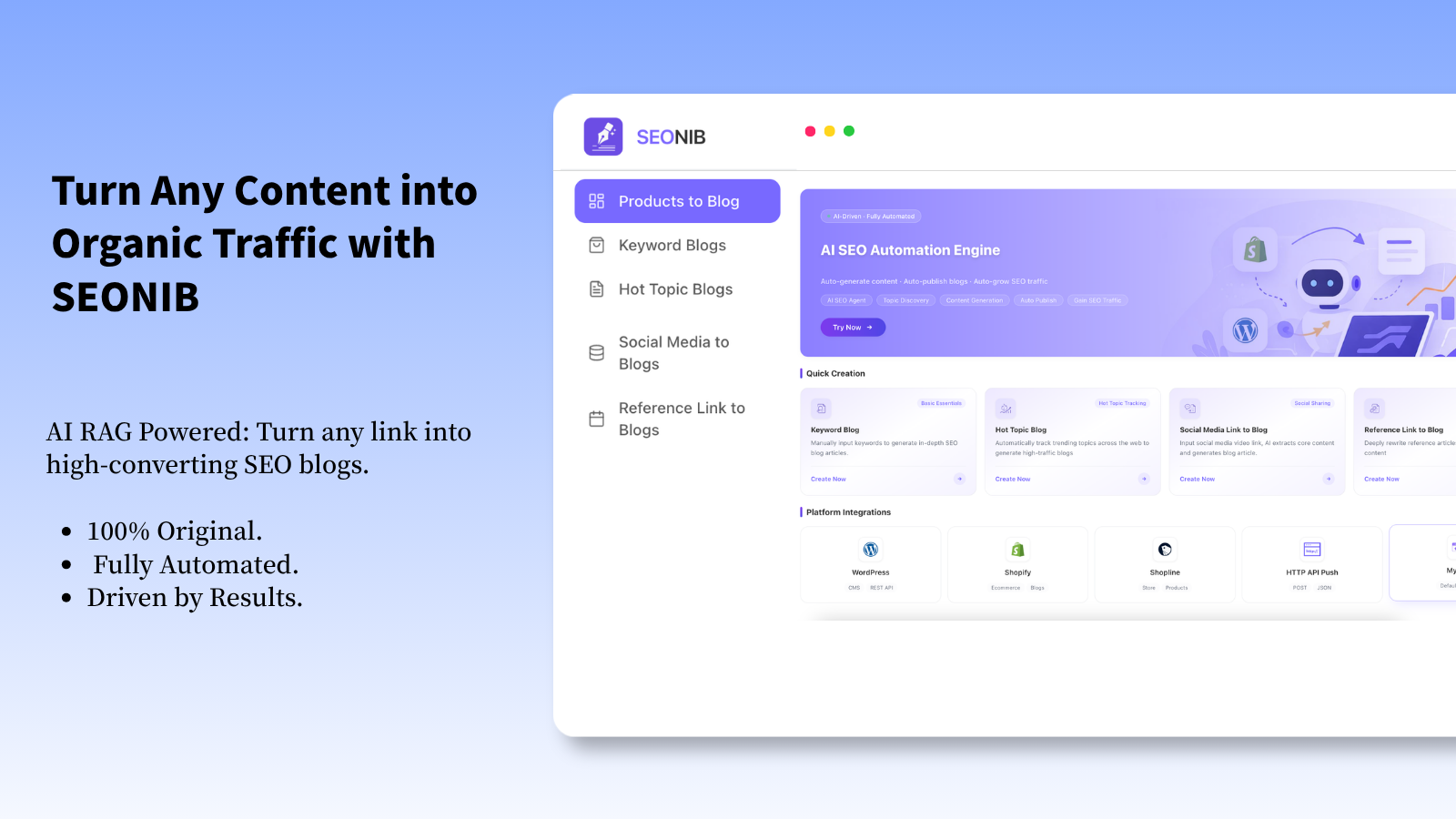

SEONIB's Deep RAG Architecture: When Your AI Stops “Spouting Nonsense”, Traffic Comes

In 2026, if you’re still using an AI that only “spouts nonsense in a very serious tone” to write blogs, your site traffic is likely as precarious as your hairline. Especially now that Google’s SGE (Search Generative Experience) has become mainstream, search‑engine AIs have become “picky.” They no longer settle for keyword stuffing; they act like demanding food critics, recommending only content that is factually accurate, logically clear, and information‑dense.

That’s why, after several rounds of traffic crashes caused by “AI hallucinations,” our team started seriously researching RAG (Retrieval‑Augmented Generation) architectures. It wasn’t until we integrated the SEONIB system into our workflow that we truly understood what it means to “teach AI how to look up information.”

Deep RAG Is Not “Shell Search,” It Gives AI a “Fact‑Checking Officer”

Many tools claim to have RAG capabilities, but the experience is like pairing a fast talker with an assistant who only half listens: the assistant catches fragments, and the talker keeps rambling anyway. SEONIB’s deep RAG architecture is different; its retrieval is not a decorative prelude but a core constraint of the generation process.

We ran a comparative test. We asked a baseline GPT model and an engine equipped with SEONIB’s deep RAG to write an article on “The Impact of Quantum Computing on SEO in 2026.” The baseline model fabricated several “latest breakthroughs from industry giants” that sounded impressive but were entirely false. The version generated by SEONIB cited company updates, recent papers, and expert opinions, all of which could be traced back to real sources from the past six months. In the test sandbox, the latter’s SGE snippet exposure rate was nearly 300% higher.

What makes its “deep” aspect special? It doesn’t simply feed the AI the first paragraph of search results. Instead, through multiple layers of semantic understanding, source authority weighting, and fact cross‑verification, it builds a dynamic “knowledge graph” as the generation foundation. This means the AI, before writing, already has the topic’s outline, controversies, and latest developments clarified—like a seasoned researcher.
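To make the idea of authority weighting plus cross‑verification concrete, here is a minimal sketch. It is not SEONIB’s implementation, which we have not seen; the `Passage` fields, the 50/50 score blend, and the two‑source threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str       # hypothetical source identifier
    claim: str        # normalized claim extracted from the passage
    relevance: float  # semantic similarity to the topic, 0..1 (assumed precomputed)
    authority: float  # assumed source-authority weight, 0..1


def cross_verified(passages, min_sources=2):
    """Keep only passages whose claim appears in at least `min_sources` distinct sources."""
    sources_by_claim = {}
    for p in passages:
        sources_by_claim.setdefault(p.claim, set()).add(p.source)
    return [p for p in passages if len(sources_by_claim[p.claim]) >= min_sources]


def rank(passages, authority_weight=0.5):
    """Order passages by a blend of semantic relevance and source authority."""
    def score(p):
        return (1 - authority_weight) * p.relevance + authority_weight * p.authority
    return sorted(passages, key=score, reverse=True)


# Illustrative data only: two sources agree on "claim X", one lone source asserts "claim Y".
passages = [
    Passage("journal-a", "claim X", relevance=0.9, authority=0.8),
    Passage("blog-b", "claim X", relevance=0.7, authority=0.3),
    Passage("forum-c", "claim Y", relevance=0.95, authority=0.1),
]

# The lone, low-authority claim is dropped before ranking; the rest become the writing context.
context = rank(cross_verified(passages))
```

In this toy run the single‑source claim never reaches the generation step, even though it had the highest raw relevance. That is the practical difference between feeding the model the top search hit and constraining it to corroborated material.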

Optimized for SGE: Become the “Dish” Search‑Engine AI Loves

SEO in 2026 is largely about optimizing the “AI reading experience.” What content structure does Google’s SGE prefer when generating answers? Based on our observations and SEONIB’s backend A/B test data, we found several counter‑intuitive insights:

First, over‑optimizing “traditional SEO elements,” such as force‑inserting H2/H3 tags or deliberately creating short paragraphs, can be counterproductive. SGE’s extraction algorithm favors natural, coherent argumentative logic. Articles generated by SEONIB use heading hierarchy to serve content depth, not just to mark up text. They mimic the structure of a human expert’s deep‑analysis report, which aligns well with the pattern SGE uses to extract information summaries.

Second, the density of facts and the way sources are presented are crucial. SEONIB’s architecture naturally weaves data, dates, and concrete case citations into the article, and uses phrasing such as “according to … report” or “… mentioned in a recent interview” to highlight reliability. This directly boosts the probability that SGE will cite the content as a “trusted snippet.”

When “Pay‑Per‑Article” Meets “Permanent Points”

Talking about cost, this is the most “outrageous” point for our team. Many AI writing tools on the market charge high monthly fees regardless of usage, draining money even when you don’t write. SEONIB’s “non‑subscription, points‑based” model feels like returning to the era of ration tickets—simple but solid.

At as low as $0.199 per article, the output is a long, fact‑solid piece built on the deep RAG architecture. This means you can run large‑scale content tests at minimal cost without fearing budget blowouts. Even more “un‑business‑like” is that the points never expire. This completely changed our content strategy—from “writing to exhaust subscription quotas” to “producing precisely when there’s a clear need,” and the content quality actually improved.
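The arithmetic behind that shift is easy to check. The sketch below uses the $0.199 per‑article figure quoted above; the $29 monthly subscription fee is a made‑up comparison point, not the price of any real tool.

```python
import math

PRICE_PER_ARTICLE = 0.199  # "as low as" rate quoted in this article


def points_cost(articles: int) -> float:
    """Total pay-per-article cost in dollars, rounded to cents."""
    return round(articles * PRICE_PER_ARTICLE, 2)


def break_even_articles(monthly_fee: float) -> int:
    """Smallest monthly article count at which pay-per-article spending reaches a flat fee."""
    return math.ceil(monthly_fee / PRICE_PER_ARTICLE)


HYPOTHETICAL_MONTHLY_FEE = 29.0  # assumption for comparison only

test_batch_cost = points_cost(50)                              # cost of a 50-article test run
break_even = break_even_articles(HYPOTHETICAL_MONTHLY_FEE)     # articles/month to match the flat fee
```

Under these assumptions, a 50‑article experiment costs under ten dollars, and you would need to publish well over a hundred articles a month before a flat fee of that size became the cheaper option.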

By the way, they now give 8 points upon registration. According to their standards, that’s enough to generate eight deep‑dive blog posts. In other words, you can try the system for free, go through the whole workflow (trend discovery, deep research, generation, and publishing), and see how its output differs from those hallucination‑prone AIs. This is probably the least risky way to try it out.

The Final Truth: Tools Eliminate Hallucinations, But Strategy Still Matters

After using SEONIB for a while, we realized a principle: even the best RAG architecture is just an extremely reliable information‑processing and generation engine. It ensures the “foundation” of your content is solid rock, not quicksand. But how tall that “content skyscraper” becomes, what style it takes, and which direction of traffic sunlight it faces still depend on the people operating it.

This tool freed our content team from the grunt work of “fact‑checking” and “data gathering,” allowing us to focus on strategy itself: uncovering unmet search intents, building content clusters, analyzing SGE exposure data, and iterating. It didn’t make SEO easier; it elevated SEO competition to its rightful dimension—strategy and insight versus brute force and hallucination.

FAQ

Q: Can deep RAG completely eliminate AI hallucinations?

A: Based on our experience with SEONIB, within its strictly defined “retrieval‑augmented generation” workflow, factual hallucinations are essentially eliminated. All core claims it generates must be backed by retrieved sources. However, it cannot guarantee that the sources themselves are 100% correct (the internet contains errors), so it provides “generation based on known facts,” not “absolute truth generation.”
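The “every core claim needs a retrieved source” rule can be pictured as a simple gate in front of publishing. This is our own illustrative sketch, not SEONIB’s code; the function name and the claim‑to‑sources mapping are hypothetical.

```python
def split_by_support(claims, evidence):
    """Separate claims with at least one retrieved source from claims with none.

    `evidence` maps a claim to the list of source identifiers retrieved for it.
    """
    supported, unsupported = [], []
    for claim in claims:
        if evidence.get(claim):  # a missing key or an empty list counts as unsupported
            supported.append(claim)
        else:
            unsupported.append(claim)
    return supported, unsupported


# Illustrative data: only "claim A" has a retrieved source.
draft_claims = ["claim A", "claim B", "claim C"]
retrieved = {"claim A": ["source-1"], "claim B": []}

keep, flag = split_by_support(draft_claims, retrieved)
```

Note what the gate does not do: it confirms that a source exists, not that the source is right, which is exactly the limitation described in the answer above.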

Q: For SGE optimization, do we need to completely change our writing style?

A: No need to deliberately “please” the AI. Our observation is that SGE prefers the qualities of high‑quality human expert content: clear logic, solid argumentation, rich information. SEONIB’s optimization simply mimics those qualities. You don’t need to write in a “machine” tone; rather, aim for more “human” and deeper content.

Q: At $0.199 per article, is the length and quality guaranteed?

A: That was our initial concern. In practice, this price corresponds to their standard deep article. Lengths are usually over 1,500 words, with complete structure and high information density. The cost control likely stems from their efficient architecture and on‑demand usage model, not from quality compression.

Q: Points are permanent—what if prices rise in the future?

A: According to their current policy, purchased points keep the terms that applied at the time of purchase. So even if the per‑article price rises later, you can still spend them at the rate you bought them at. It’s like locking in content production costs early.

Q: For teams without technical backgrounds, how difficult is integration and use?

A: Almost zero. SEONIB is designed to be “plug‑and‑play.” You don’t need to configure servers or understand RAG theory. The process is similar to using a regular writing assistant: input a topic or keywords, wait for generation, review, and publish. All technical complexity is encapsulated in the backend.